Interpreting the originality reports generated by Turnitin is an art. It requires some insight into the subject matter, the nature of the assessment task that was set, and the student's usual writing style and language skills.

There are two key issues:

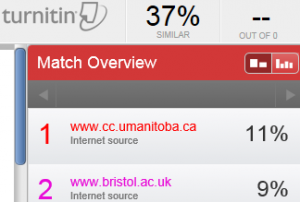

- There is no ‘magic number’. Although the similarity score is colour coded red-amber-blue-green, there is no threshold below which we can assume that all is in order, or above which we should suspect that plagiarism has occurred. For various reasons, an essay may yield a fairly high score and simply be in need of remediation. Conversely, it may yield a low score because text matches have been artificially ‘engineered out’.

- Whatever the Turnitin score shows, the software is simply a text-matching tool, not a ‘plagiarism detection’ device. Expert human intervention and judgement are needed to investigate and interpret the similarity score.

IT Services offers the following forms of support to help academics and administrators manage their use of Turnitin and interpret the originality reports:

- A support site in WebLearn containing many help videos (see ‘Turnitin videos’ and the ‘SIPA case studies’, e.g. ‘How an Oxford tutor uses Turnitin via WebLearn’): https://weblearn.ox.ac.uk/info/plag

- Courses on using Turnitin (directly or via WebLearn) and interpreting originality reports – see the Home page of the same support site

- Individual consultation: send email to Turnitin@it.ox.ac.uk

- This Turnitin blog to keep up to date: https://blogs.it.ox.ac.uk/tii/

- The mailing list (tii-community@weblearn.ox.ac.uk) in the Turnitin User Group site (https://weblearn.ox.ac.uk/info/plag/tiiug), for sharing ideas and questions.

Please make use of the available channels for discussion and support.