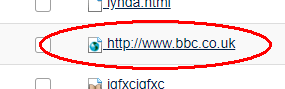

Web Links are items in Resources that, when clicked, redirect the user to a specific web page. These are usually external web sites, but they can also be links to pages or documents stored in WebLearn.

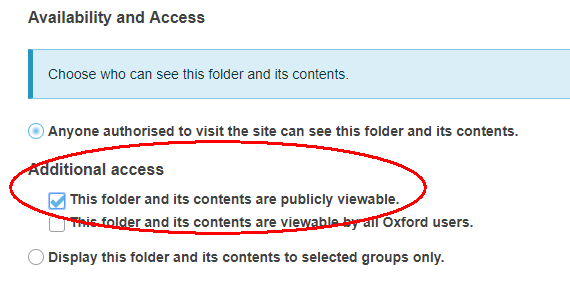

Web Links are not included when exporting the whole site (Site Info > Export Site). However, you can use the following Unix command-line script to automatically generate a web page containing all Web Links in a particular site. You must first make sure that all folders in Resources containing Web Links are available to the public: select Edit Details alongside each folder and set its visibility to public.

The script in full:

    #!/bin/sh
    if [ "$#" -ne 1 ] ; then
      echo "Usage: weblinks2html WebLearn-URL, for example, weblinks2html https://weblearn.ox.ac.uk/direct/content/site/[[siteid]].json" >&2
    else
      # assumes the web file has public access
      curl $1 > json
      cat json | grep -e \\.URL\" -e entityTitle |\
      sed -e 's/"entityTitle": "/echo \\\"">/g' -e 's/"$/<\/a><\/li>";/g' |\
      sed -e 's/URL"/URL/g' |\
      sed 's|^ "url": |echo "<li><a target=\\\"_blank\\\" href\=\\\"" \| tr -d "\\r\\n"; curl -s -D - -o /dev/null |g' |\
      sed 's+,$+ | sed -n ZzZzZzZzZzZzs\/^Location: \/\/pZzZzZzZzZzZz | tr -d '\'\\\\r\\\\n\'';+g' | \
      sed "s/ZzZzZzZzZzZz/'/g" |\
      sed 's/"https/https/g' | \
      sed 's|\\/|/|g' > curls;
      . curls | sed '/^">/d' > weblinks;
      (echo "<ul>"; cat weblinks; echo "</ul>")
    fi
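The key step in the script is to request each Web Link, read the `Location` header of the redirect response, and wrap the resulting address in a list item. The following is a minimal sketch of that step in isolation, not a replacement for the script. The function names `extract_location` and `make_item` are hypothetical and do not appear in the original. The example uses canned headers, so it needs no network access. Unlike the original, it also accepts a lowercase `location:` header.

```shell
#!/bin/sh
# Sketch only: the helper names below are hypothetical illustrations.

# Read HTTP response headers on stdin and print the value of the first
# Location header, with the trailing carriage return and newline removed.
extract_location() {
    sed -n 's/^[Ll]ocation: //p' | head -n 1 | tr -d '\r\n'
}

# Print one HTML list item linking to the URL in $1 with the link text in $2.
# Assumes neither argument contains characters that need HTML escaping.
make_item() {
    printf '<li><a target="_blank" href="%s">%s</a></li>\n' "$1" "$2"
}

# Canned response headers standing in for the output of `curl -s -D - -o /dev/null`.
headers=$(printf 'HTTP/1.1 302 Found\r\nLocation: https://www.example.org/\r\nContent-Length: 0\r\n\r\n')
target=$(printf '%s\n' "$headers" | extract_location)

echo "<ul>"
make_item "$target" "Example site"
echo "</ul>"
```

Against a live Web Link, the canned headers would be replaced by something like `curl -s -D - -o /dev/null "$url" | extract_location`, which uses the same curl options as the script.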

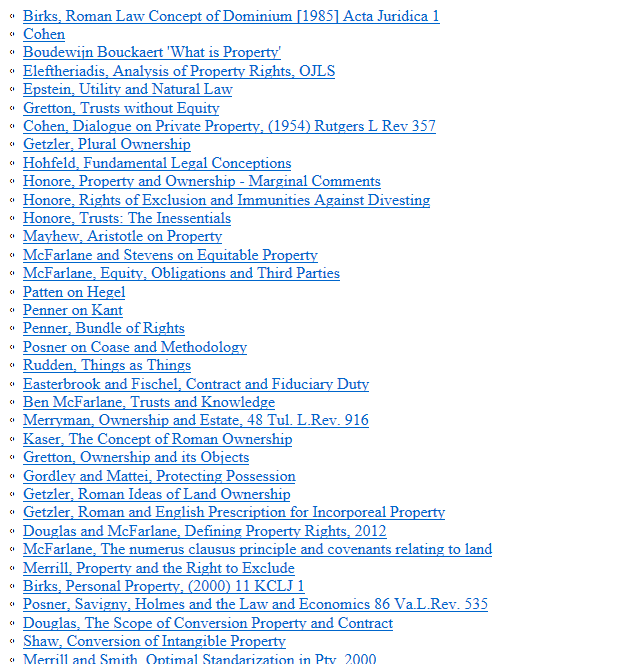

The script generates a page containing an HTML list, which can be pasted into a WYSIWYG editor on a web page. Here’s an example.

Windows 10 users can open a Unix (Linux) window via the Windows Subsystem for Linux facility (see: https://docs.microsoft.com/en-us/windows/wsl/install-win10) or use linux.ox.ac.uk, which can be accessed using PuTTY (see: https://www.putty.org/).

Alternatively, send a message to weblearn@it.ox.ac.uk, making sure to include the address (URL) of the WebLearn site containing the Web Links, and we’ll do it for you.

There is one caveat: only the first 5 levels of nested folders in Resources are exported.